Artificial Intelligence has changed the way we search, write, analyze, and automate tasks. From chatbots to content generation tools, Large Language Models (LLMs) like OpenAI’s ChatGPT and Google’s Gemini are now part of daily business operations.

But there’s one major issue: LLM hallucinations.

If you’ve ever seen an AI confidently give a wrong answer, invent facts, or cite sources that don’t exist, you’ve seen a hallucination.

So how do we reduce it?

A widely used answer is RAG (Retrieval-Augmented Generation).

What Are LLM Hallucinations?

LLM hallucinations are moments when an AI gives you an answer that sounds perfectly correct but is actually wrong or completely made up.

Think of it like this: AI tools such as OpenAI’s ChatGPT don’t truly “know” facts the way humans do. On their own, they don’t think, research, or verify information in real time. Instead, they predict the most likely next word based on patterns they learned during training.
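The idea of “predicting the most likely next word” can be made concrete with a deliberately tiny sketch. This is a toy bigram counter in Python, not how ChatGPT is built (real LLMs use neural networks trained on tokens), and the corpus and function names are illustrative assumptions. It does, however, show the same failure mode: the model returns whichever continuation it saw most often, with no notion of whether that continuation is true.

```python
from collections import Counter, defaultdict


def train_bigrams(sentences):
    """Count how often each word is followed by each other word."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            follows[prev][nxt] += 1
    return follows


def predict_next(follows, prompt):
    """Return the most frequent continuation of the prompt's last word.

    Nothing here checks whether the answer is true; the toy model only
    knows which word appeared most often in its training text.
    """
    last = prompt.lower().split()[-1]
    if not follows[last]:
        return None  # never saw this word, so no prediction at all
    return follows[last].most_common(1)[0][0]


# Hypothetical training text for the toy model.
corpus = [
    "the capital of france is paris",
    "paris is beautiful",
    "the capital of italy is rome",
    "the capital of france is paris",
]
model = train_bigrams(corpus)
print(predict_next(model, "The capital of Spain is"))  # → paris
```

Asked about Spain, which never appeared in its training text, the toy model still answers “paris”, because that is the word that most often followed “is”. A fluent, confident, wrong answer is what a hallucination looks like.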

Sometimes, when the AI doesn’t have enough information or isn’t sure about something, it still tries to give a smooth and confident answer. In doing so, it may:

- Make up facts or numbers

- Create fake sources

- Provide outdated details

- Fill in gaps with incorrect assumptions

It’s similar to a student guessing on an exam: sounding confident without being accurate.

So an LLM hallucination isn’t intentional lying. It’s simply the AI trying its best to respond, even when it doesn’t truly know the answer.

Why Do LLMs Hallucinate?

Large Language Models (LLMs) can sometimes provide information that sounds correct but is actually inaccurate. This happens because these models are designed to generate text based on patterns, not to verify facts. For example, ChatGPT creates responses by predicting which words are most likely to come next in a sentence.

There are a few simple reasons why hallucinations occur:

- Pattern-Based Generation: LLMs focus on predicting words rather than checking if the information is true.

- Limited Knowledge: They only know what was in their training data, so newer events and private company information are missing.

- No Built-in Fact Checking: Without access to external sources, the model cannot confirm details.

- Unclear Questions: If a question lacks context, the AI may guess the missing information.

In simple terms, hallucinations happen because AI tries to produce a helpful answer even when it doesn’t have complete or verified information.

What Is RAG (Retrieval-Augmented Generation)?

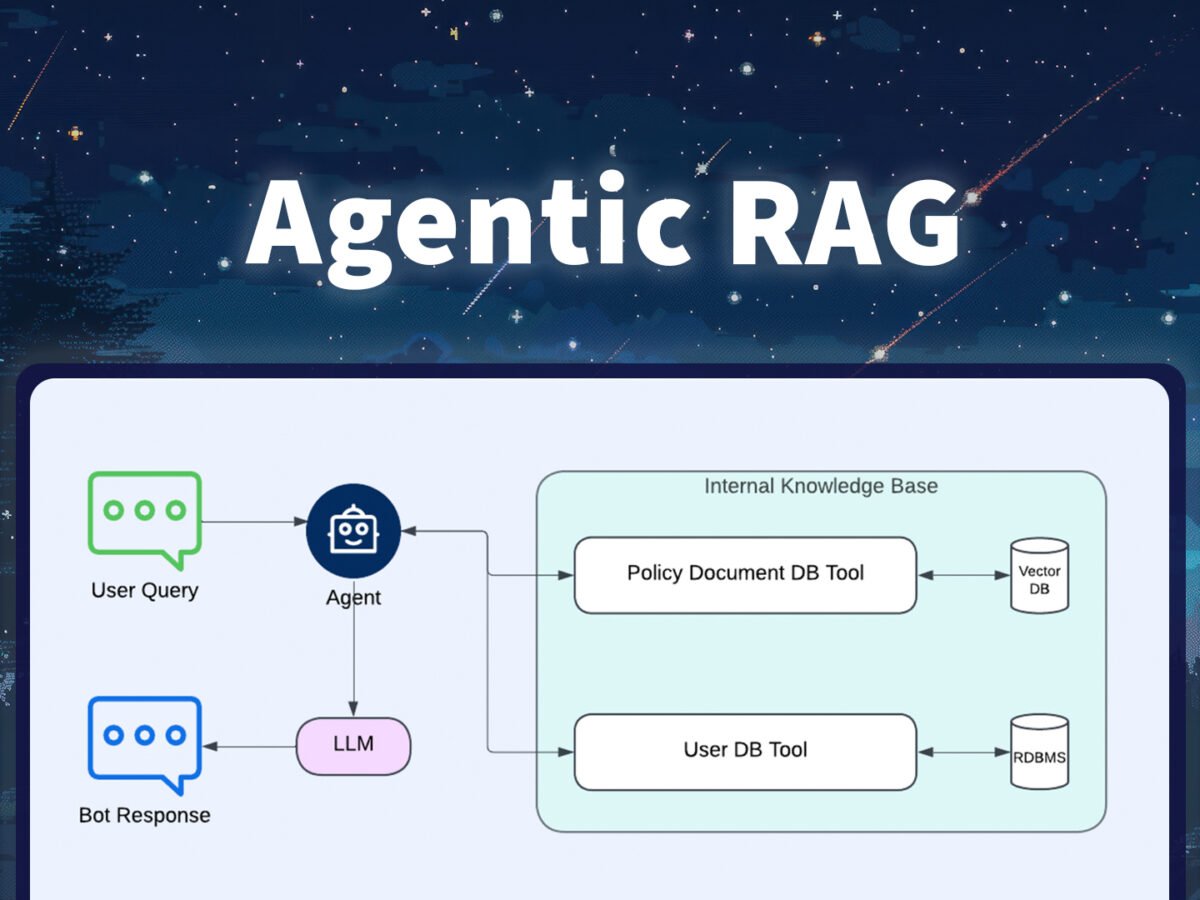

Retrieval-Augmented Generation (RAG) is an advanced AI technique that improves the accuracy and reliability of AI-generated answers by combining information retrieval with language generation.

In simple terms, instead of relying only on pre-trained knowledge, a RAG-powered system first fetches relevant information from external sources, such as documents or databases, and then uses it to generate a response.

AI models like ChatGPT are powerful, but on their own they generate answers from patterns learned during training, which can lead to outdated or incorrect responses. RAG reduces this risk by grounding the model in external, trusted data sources.
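Grounding usually means placing the retrieved text directly into the prompt the model sees. The sketch below shows one common way to do that, assuming the relevant passages have already been fetched; the function name, prompt wording, and sample refund-policy passages are hypothetical, and the actual LLM call is left out.

```python
def build_grounded_prompt(question: str, passages: list[str]) -> str:
    """Combine retrieved passages and the user's question into one prompt.

    The instructions ask the model to rely only on the supplied context,
    to cite passages by number, and to admit when the answer is missing.
    """
    # Number each passage so the model can refer back to its sources.
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer the question using only the context below. "
        "Cite the passage numbers you used. If the context does not "
        "contain the answer, reply \"I don't know.\"\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


# Hypothetical passages, assumed to come from the retrieval step.
passages = [
    "Our refund window is 30 days from delivery.",
    "Refunds are issued to the original payment method.",
]
print(build_grounded_prompt("How long do customers have to request a refund?", passages))
```

Numbering the passages lets the model say which source it used, and the explicit “I don't know” instruction gives it an alternative to guessing. Instructions like this lower the risk of hallucination but do not eliminate it.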

How Does RAG Work?

RAG combines search with AI generation, so the model answers from retrieved information instead of guessing.

An LLM becomes more accurate when powered by RAG because it doesn’t rely only on what it memorized during training; it first retrieves relevant data.

Here’s how it works in a simple way:

1. User asks a question

Everything starts with a query.

Example: “What are the latest export compliance rules?”

2. System searches for relevant information

Instead of answering immediately, the system looks into:

- Documents

- PDFs

- Databases

- Knowledge bases

3. Relevant data is retrieved

The system picks only the most useful and relevant information related to the question.

4. AI generates the answer

The language model uses this retrieved data as context and creates a response based on real information, not assumptions.

In simple terms: RAG = Search first → Then answer
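The four steps above can be sketched end to end in a few lines of Python. This is a simplified illustration under stated assumptions: retrieval here is plain keyword overlap (production systems typically use embeddings and a vector database), the documents are hypothetical sample text, and `generate` is a stub standing in for a real LLM call.

```python
import re
from collections import Counter

# Common words ignored when scoring, so "the" or "what" don't count as matches.
STOPWORDS = {"a", "an", "the", "is", "are", "what", "of", "to"}


def tokenize(text: str) -> list[str]:
    """Lowercase the text and return its words, minus stopwords."""
    return [w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS]


def retrieve(question: str, documents: list[str], k: int = 2) -> list[str]:
    """Steps 2-3: score each document by word overlap with the question
    and keep up to k documents that share at least one word."""
    q_counts = Counter(tokenize(question))
    scored = []
    for doc in documents:
        overlap = sum((q_counts & Counter(tokenize(doc))).values())
        if overlap > 0:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:k]]


def generate(question: str, context: list[str]) -> str:
    """Step 4 (hypothetical stub): a real system would send the question plus
    the retrieved context to an LLM. Here we simply echo the best passage."""
    if not context:
        return "I don't know: no relevant documents were found."
    return f"Based on the documents: {context[0]}"


def rag_answer(question: str, documents: list[str]) -> str:
    """Step 1 is the incoming question; search first, then answer."""
    return generate(question, retrieve(question, documents))


# Hypothetical knowledge base.
docs = [
    "Export compliance rules were revised to add new screening requirements.",
    "The cafeteria menu changes every Monday.",
    "Travel expenses must be approved by a manager.",
]
print(rag_answer("What are the latest export compliance rules?", docs))
print(rag_answer("Who won the match?", docs))
```

Because retrieval runs before generation, a question with no matching documents gets an explicit “I don't know” instead of an invented answer. Swapping the keyword scorer for embedding similarity changes how well relevant passages are found, but not the overall search-first, answer-second structure.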

RAG vs Traditional LLM

The key differences between the two approaches are summarized below:

| Feature | Traditional LLM | RAG |
|---|---|---|
| Knowledge source | Training data only | Training data + external documents |
| Knowledge updates | Requires retraining | Update the documents, no retraining |
| Hallucination risk | Higher | Lower |
| Enterprise reliability | Limited | More reliable |
| Fact verification | Weak | Stronger (answers can cite sources) |

Traditional LLM: A smart storyteller

RAG system: A smart researcher who checks sources before answering
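The difference in how each approach handles new information can be illustrated with a small simulation. Both `traditional_llm` and the in-memory `KnowledgeBase` are hypothetical stand-ins, and the refund-policy text is made up. The point is that the RAG side picks up a new document immediately, with no retraining, and says “I don't know” when nothing relevant has been retrieved.

```python
import re

# Common words ignored when matching questions to documents.
STOPWORDS = {"a", "an", "the", "is", "are", "what", "of", "to"}


def keywords(text: str) -> set[str]:
    """Lowercased words in the text, minus common stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


class KnowledgeBase:
    """Hypothetical in-memory document store. Adding a document takes
    effect immediately; no model is retrained."""

    def __init__(self) -> None:
        self.documents: list[str] = []

    def add(self, document: str) -> None:
        self.documents.append(document)

    def search(self, question: str) -> list[str]:
        """Return every stored document that shares a keyword with the question."""
        q = keywords(question)
        return [doc for doc in self.documents if q & keywords(doc)]


def traditional_llm(question: str) -> str:
    """Hypothetical stand-in for a model whose knowledge is frozen at
    training time: it always answers confidently, even if out of date."""
    return "Our refund window is 14 days."


def rag_system(question: str, kb: KnowledgeBase) -> str:
    """Answer only from retrieved documents; abstain when nothing matches."""
    hits = kb.search(question)
    return hits[0] if hits else "I don't know."


kb = KnowledgeBase()
question = "What is the refund window?"
print(traditional_llm(question))  # → Our refund window is 14 days.
print(rag_system(question, kb))   # → I don't know.

kb.add("Updated policy: the refund window is now 30 days.")
print(rag_system(question, kb))   # → Updated policy: the refund window is now 30 days.
```

The storyteller keeps repeating what it learned; the researcher answers from whatever sources are currently on the shelf, and stays quiet when there are none.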